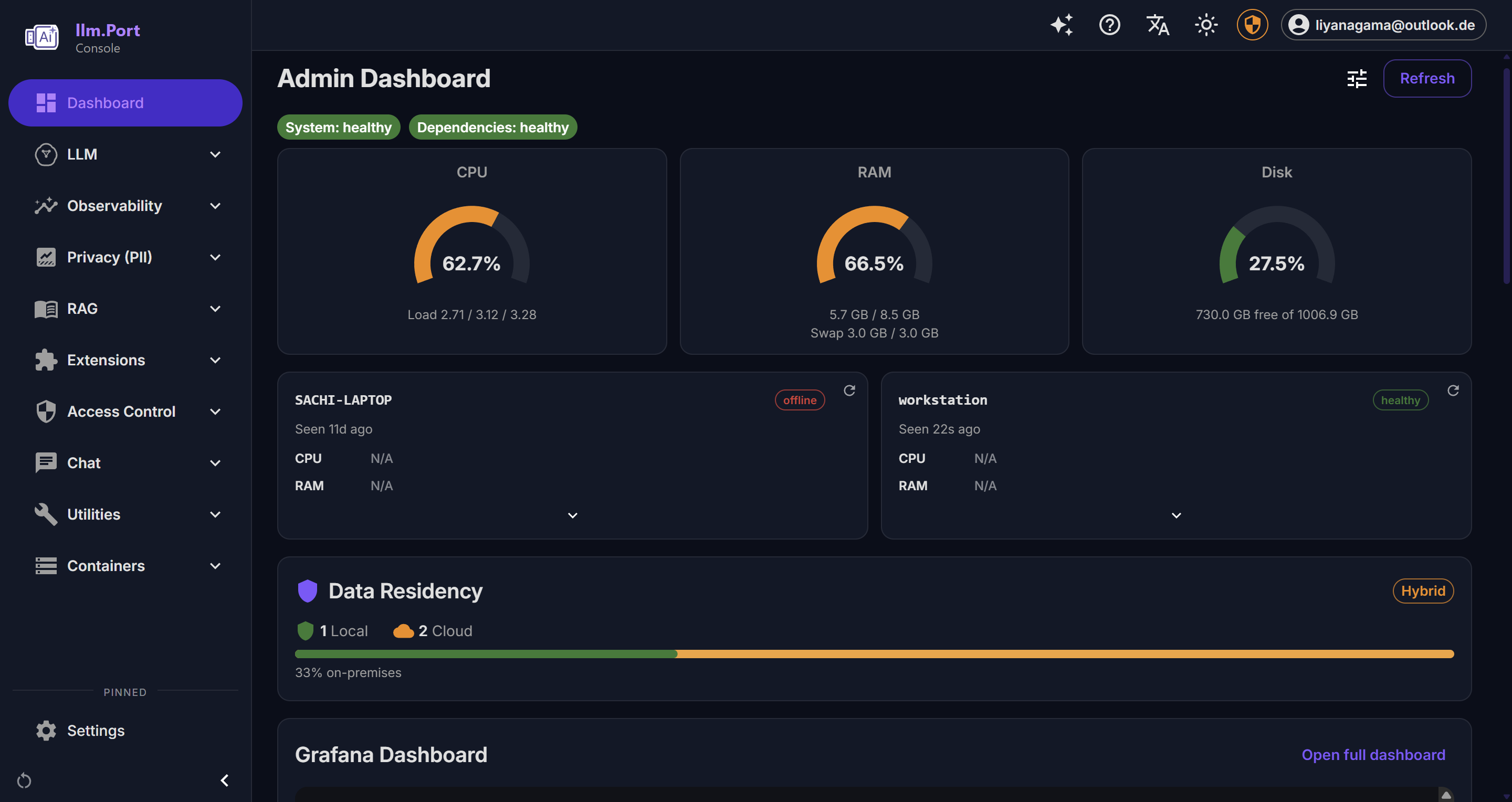

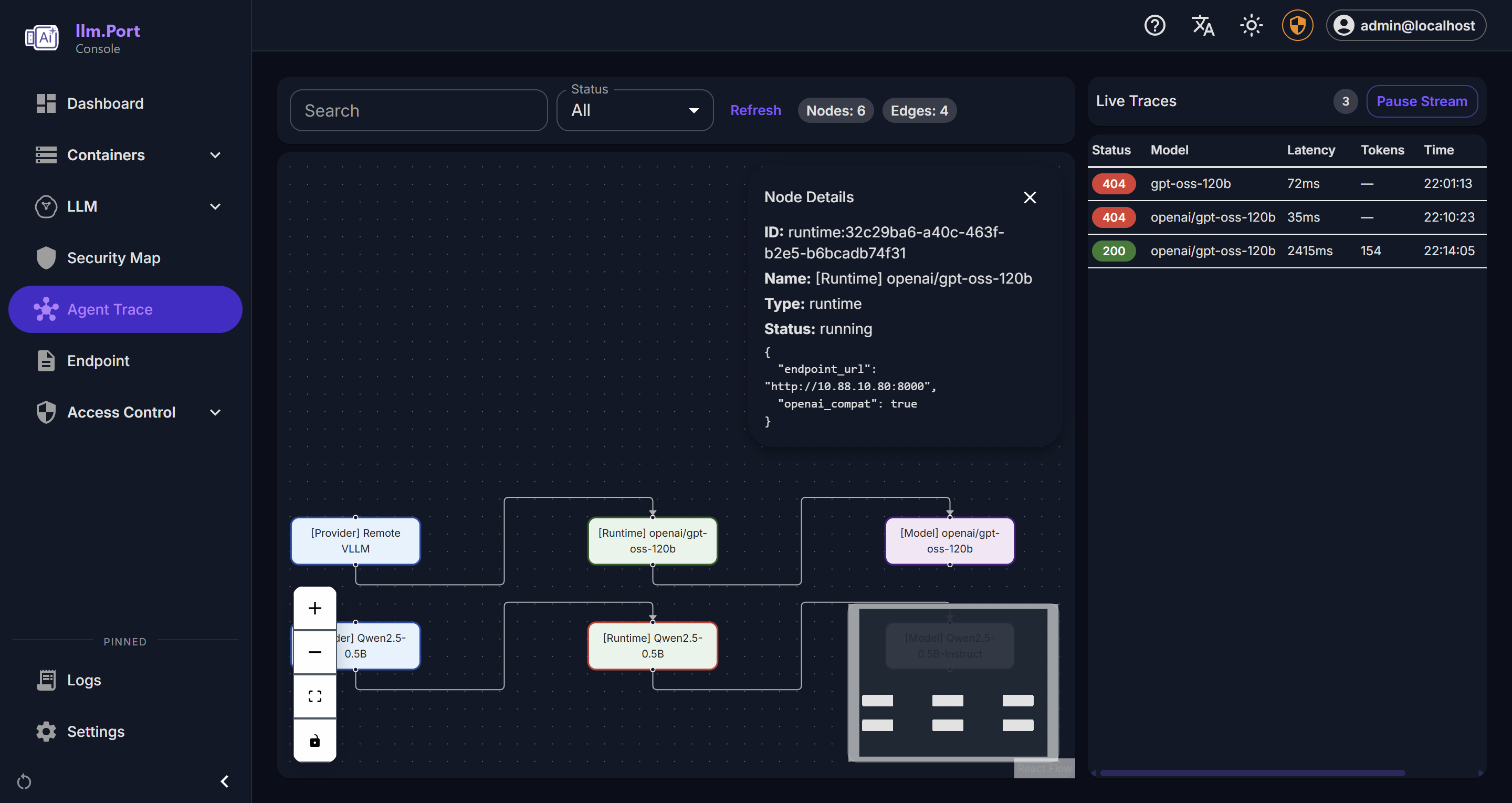

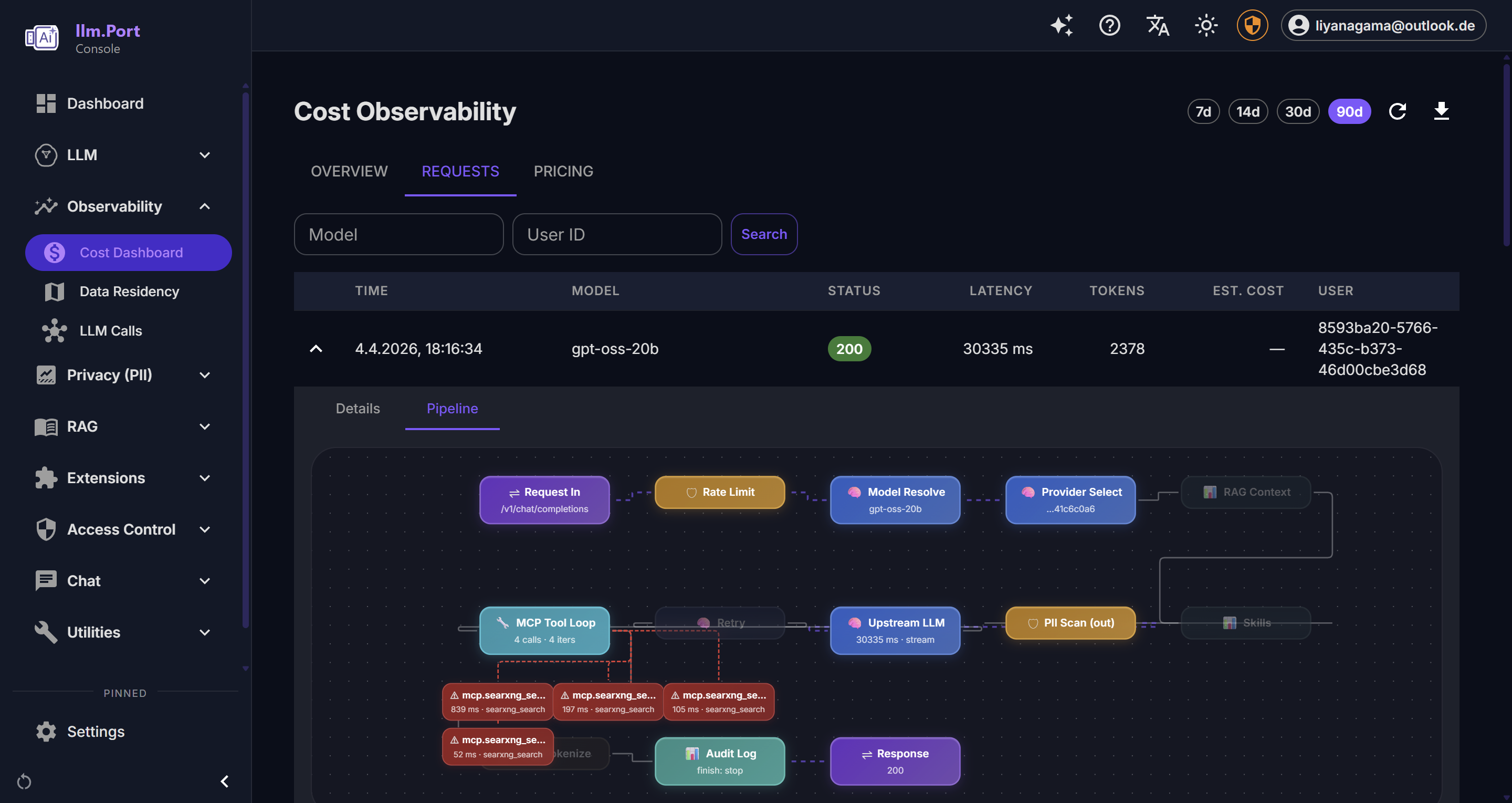

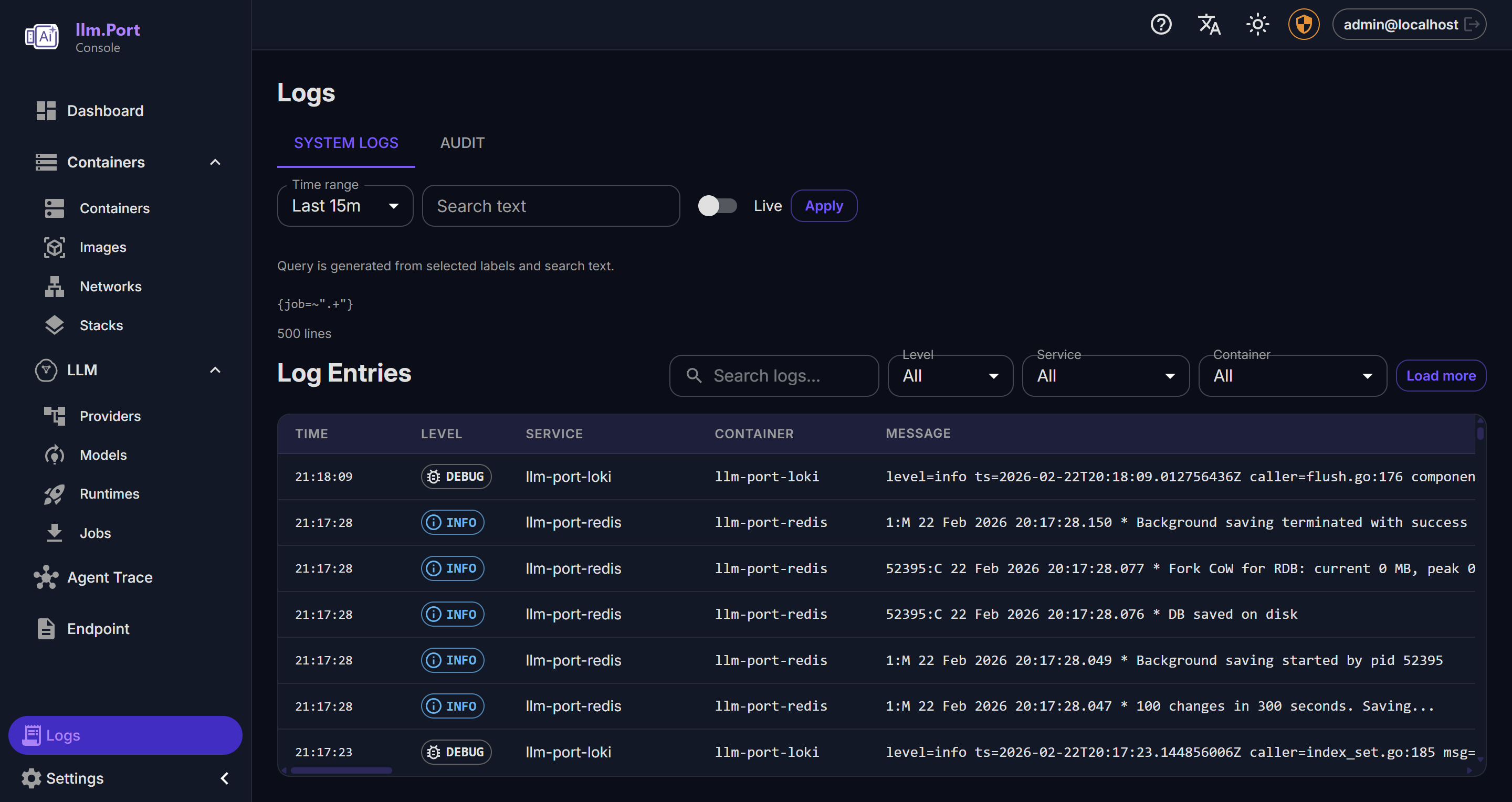

Observability

llm.port helps you quickly understand what is happening in your day-to-day AI operations.

You can answer questions such as:

- Are requests stable and fast?

- Which models are used most?

- Where are costs rising?

- Who changed what, and when?

What you can observe

- Request activity and outcome trends

- Latency and throughput indicators

- System health and service behavior

- Administrative action trails

Why this matters

- Faster incident detection and troubleshooting

- Better governance and compliance reporting

- Data for capacity planning and optimization

For many teams, this dashboard becomes the primary source of operational truth.

Recommended operating practice

- Define alert thresholds for key service indicators

- Review usage and access trends regularly

- Keep retention policies aligned with compliance requirements
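The first practice can be sketched as a small threshold check. This is a minimal sketch that assumes you can export per-request records that include a latency and a success flag. The `RequestRecord` shape, the threshold values, and the `percentile` and `evaluate` helpers are hypothetical and are not part of llm.port. The sketch computes a nearest-rank p95 latency and an error rate, then reports which indicators exceed their thresholds.

```python
import math
from dataclasses import dataclass


# Hypothetical shape of one exported request record; llm.port's real export may differ.
@dataclass
class RequestRecord:
    model: str
    latency_ms: float
    ok: bool


def percentile(values, pct):
    """Nearest-rank percentile (pct in 0-100) of a non-empty list of numbers."""
    ordered = sorted(values)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank - 1, 0)]


# Hypothetical thresholds; tune them to your own service-level objectives.
THRESHOLDS = {"p95_latency_ms": 2000.0, "error_rate": 0.05}


def evaluate(records):
    """Return the names of indicators whose value exceeds its threshold."""
    if not records:
        return []
    indicators = {
        # 95th-percentile latency across all records in the window.
        "p95_latency_ms": percentile([r.latency_ms for r in records], 95),
        # Fraction of requests that did not succeed.
        "error_rate": sum(not r.ok for r in records) / len(records),
    }
    return [name for name, value in indicators.items() if value > THRESHOLDS[name]]
```

For example, a window of 20 requests in which 2 failed after 5000 ms returns `["p95_latency_ms", "error_rate"]`, while 20 fast, successful requests return an empty list. Nearest-rank percentiles are used here because they are simple and always return an observed value.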

In the Requests tab, you can usually investigate user-reported issues faster.

Public docs focus on observable outcomes and operating guidance, not internal telemetry plumbing.

Screenshots