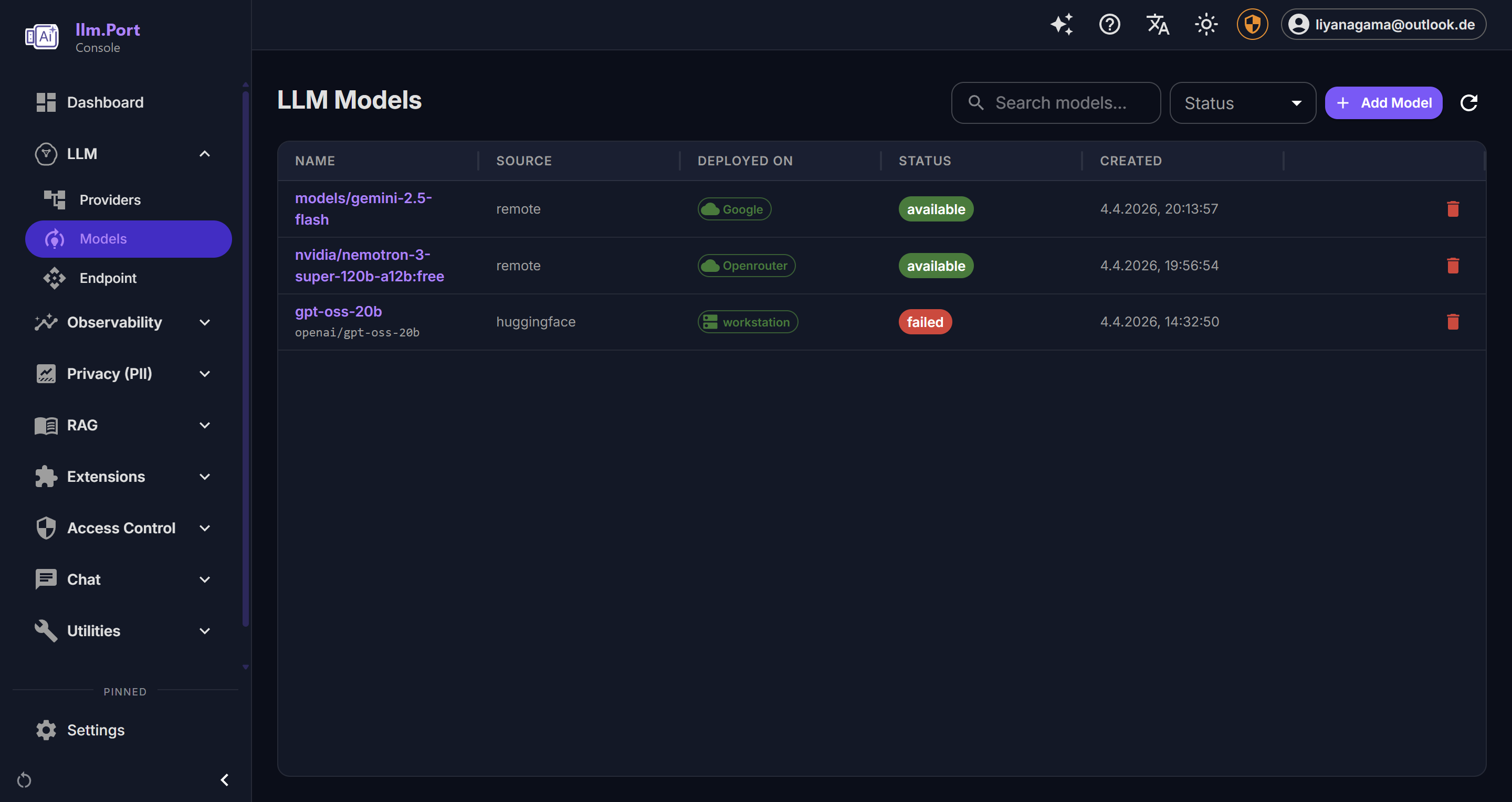

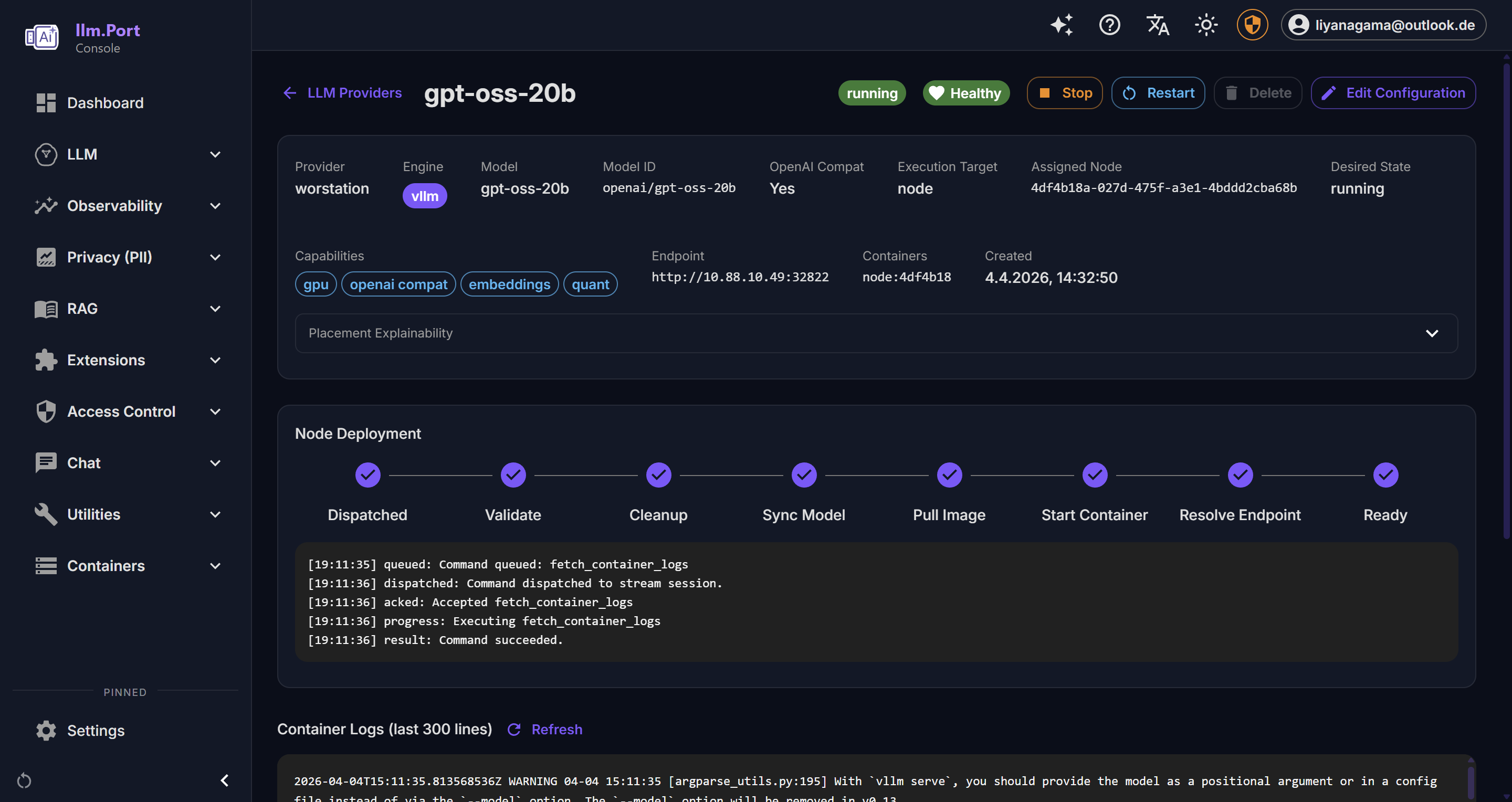

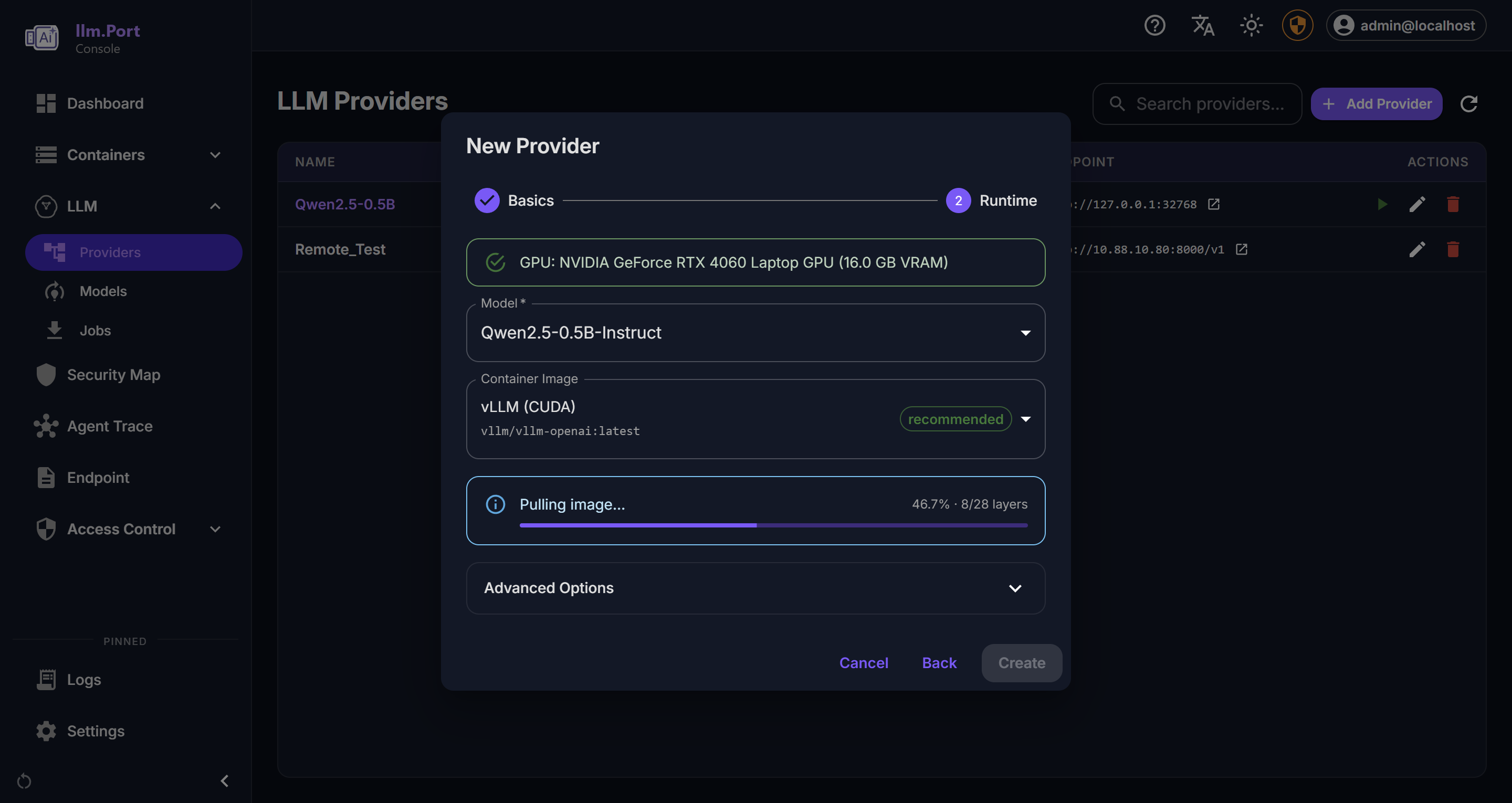

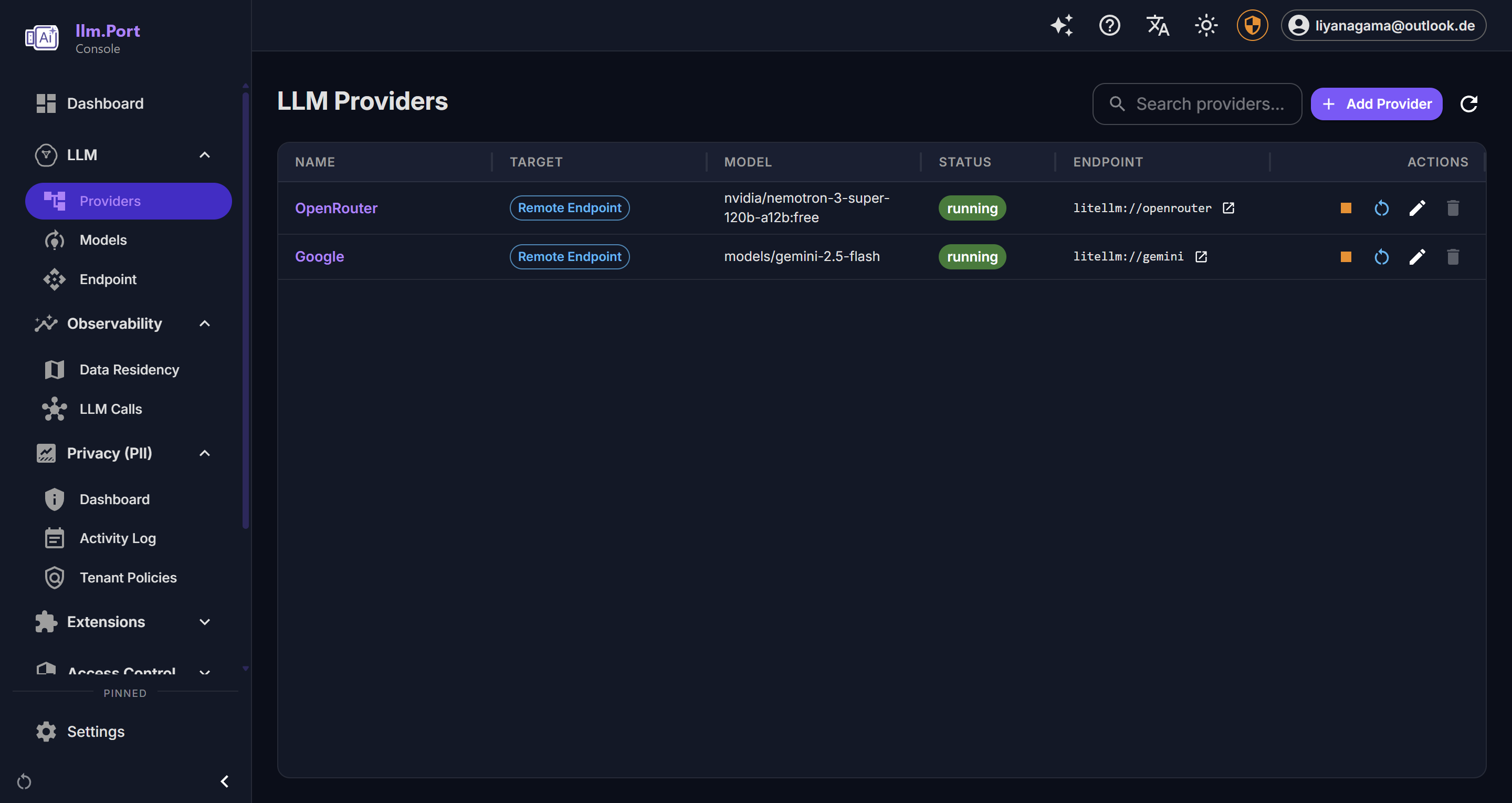

Runtime Management

llm.port lets teams manage local runtimes and remote providers from one platform.

Supported operating models

- Local runtime hosting for data-sensitive workloads

- Remote provider usage for elasticity and model breadth

- Hybrid operation, with routing controlled per model alias
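Hybrid operation depends on clients requesting a model alias rather than a specific runtime or provider. The following is a hypothetical sketch. This page does not document llm.port's configuration format, so the `Backend` type, the `ROUTES` table, the `resolve` function, and every runtime, provider, and model name are illustrative assumptions, not llm.port's API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Backend:
    kind: str    # "local" or "remote" (hypothetical categories)
    target: str  # runtime or provider identifier (hypothetical values)
    model: str   # model name as that backend knows it


# Hypothetical alias table: clients ask for an alias, never a concrete backend.
ROUTES: dict[str, Backend] = {
    "chat-sensitive": Backend("local", "local-runtime-a", "llama-3-8b-instruct"),
    "chat-general": Backend("remote", "provider-x", "large-model-v1"),
}


def resolve(alias: str) -> Backend:
    """Return the backend an alias currently points to."""
    try:
        return ROUTES[alias]
    except KeyError:
        raise KeyError(f"unknown model alias: {alias!r}") from None
```

Under this assumed layout, moving `chat-general` from a remote provider to a local runtime is a one-entry change, and callers keep requesting the same alias.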

Operator benefits

- Unified configuration and monitoring workflows

- Easier runtime comparison and migration decisions

- Better control over performance and cost posture

Rollout recommendations

- Define approved runtime/provider options

- Standardize alias naming per environment

- Validate model behavior before production cutover
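The last two recommendations can be made concrete with a naming check and a cutover gate. This is a minimal sketch under stated assumptions. The `<env>-<purpose>` alias convention, the environment names, the `is_valid_alias` and `ready_for_cutover` helpers, and the substring-based pass criterion are all hypothetical and not part of llm.port. A real validation step would use a proper evaluation suite.

```python
import re
from typing import Callable

# Hypothetical environment names; substitute the environments you actually run.
ALLOWED_ENVS = ("dev", "staging", "prod")

# Hypothetical convention: "<env>-<purpose>", lowercase and hyphen-separated,
# e.g. "prod-chat" or "staging-embed-v2".
ALIAS_PATTERN = re.compile(rf"(?:{'|'.join(ALLOWED_ENVS)})-[a-z0-9]+(?:-[a-z0-9]+)*")


def is_valid_alias(alias: str) -> bool:
    """Return True if the alias follows the assumed per-environment convention."""
    return ALIAS_PATTERN.fullmatch(alias) is not None


def ready_for_cutover(
    generate: Callable[[str], str],
    cases: list[tuple[str, str]],
    min_pass_rate: float = 1.0,
) -> bool:
    """Run a candidate model over (prompt, expected substring) cases.

    `generate` stands in for whatever call sends a prompt to the candidate
    runtime or provider. The substring check is a deliberately crude
    stand-in for a real evaluation.
    """
    if not cases:
        return False  # refuse to approve a cutover with no evidence
    passed = sum(1 for prompt, expected in cases if expected in generate(prompt))
    return passed / len(cases) >= min_pass_rate
```

Gating on a pass rate over a fixed case set lets the same check run in staging and again just before the production alias is repointed.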

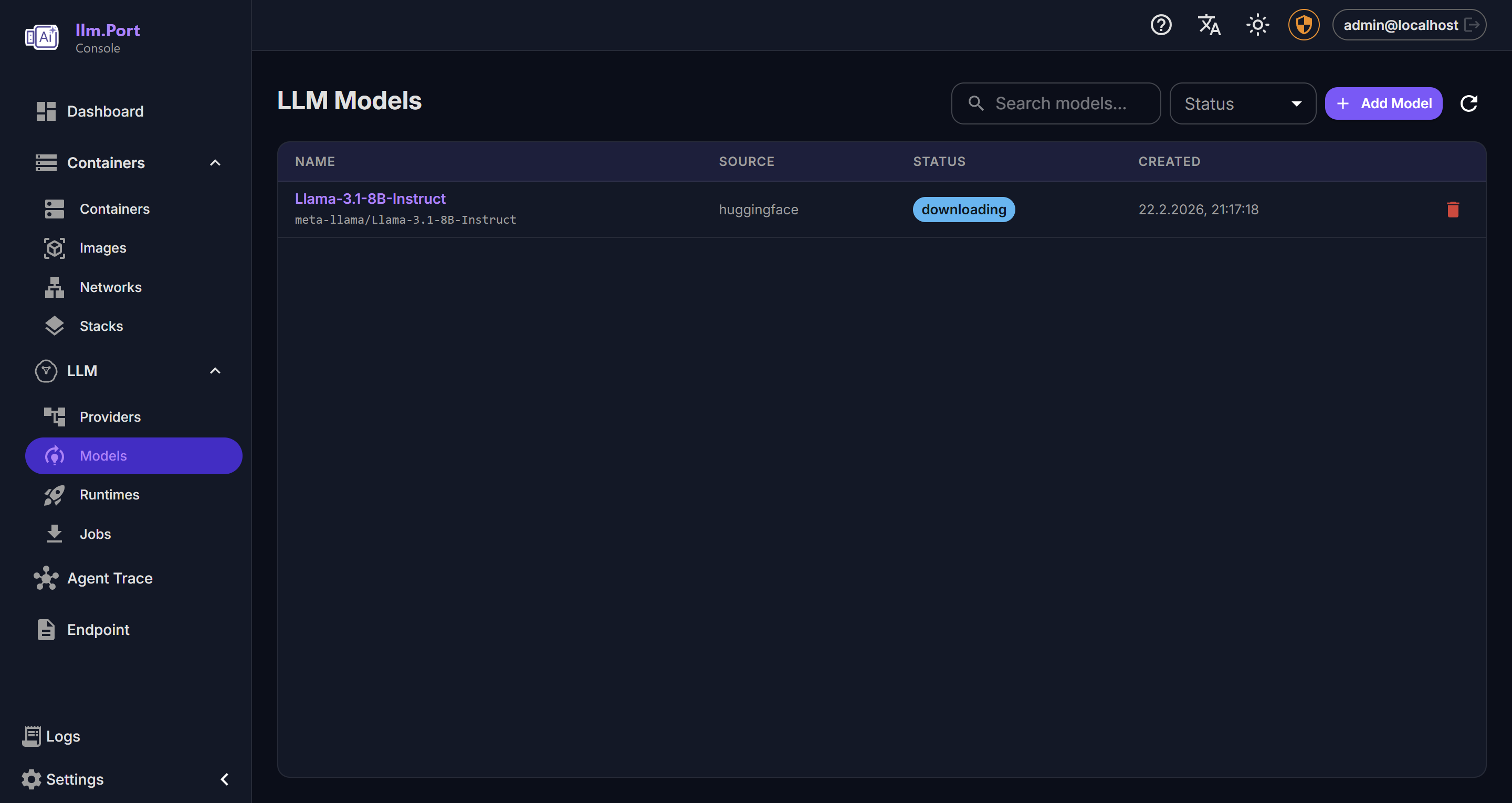

Screenshots