RAG Workflows

llm.port supports Retrieval-Augmented Generation (RAG) for document-grounded responses.

What RAG enables

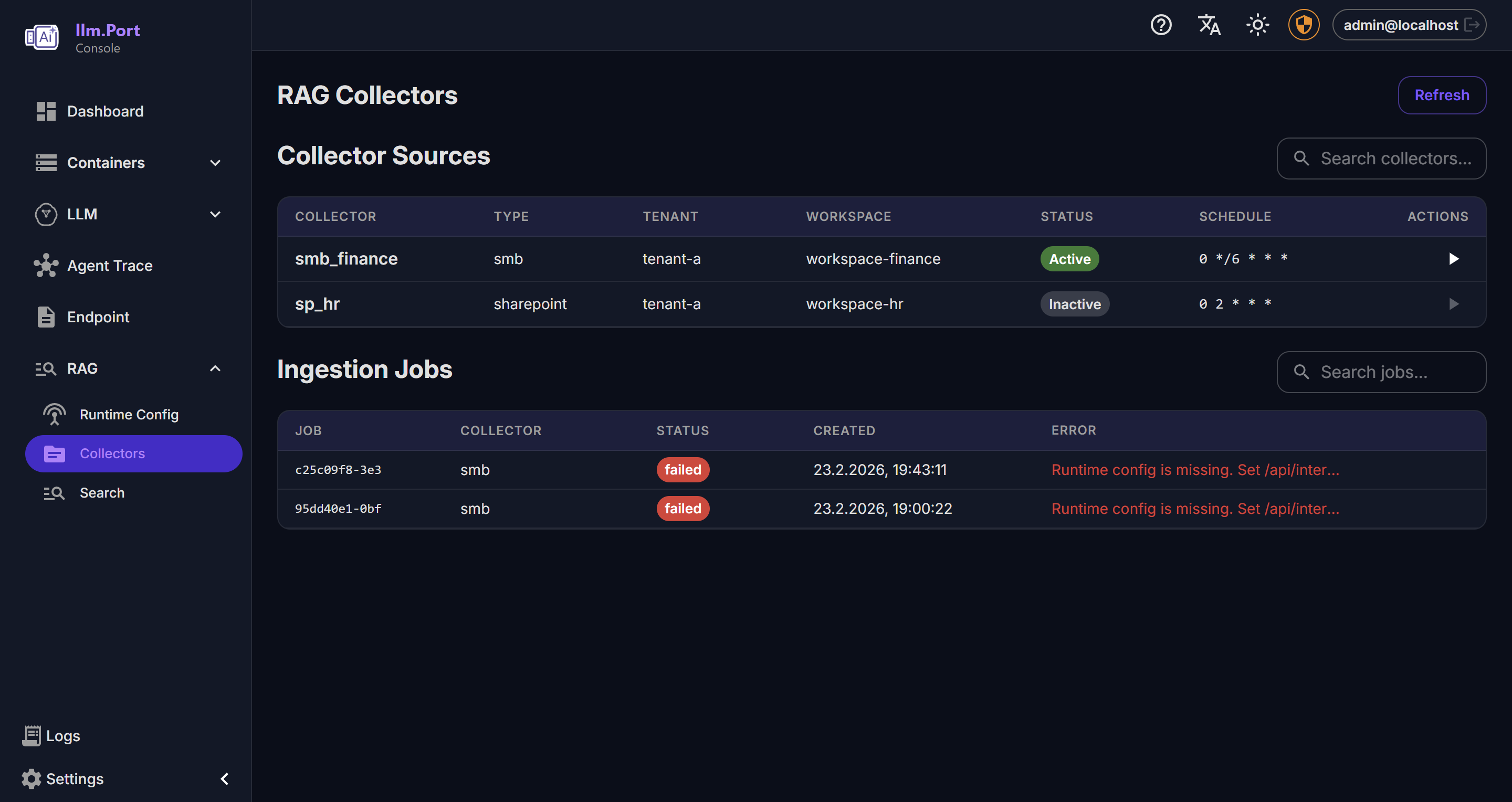

- Ingest enterprise documents

- Search relevant context at query time

- Improve answer quality with controlled knowledge sources
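
As a rough illustration of the three capabilities above, here is a minimal, self-contained sketch of the retrieve-then-prompt pattern. It is not llm.port's implementation or API. The names `ingest`, `search`, and `build_prompt` are hypothetical, and simple word-overlap scoring stands in for whatever indexing a real deployment uses.

```python
import re
from collections import Counter


def tokenize(text):
    """Lowercase a string and split it into alphanumeric word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def ingest(documents):
    """Build a toy index: document id -> (original text, token counts)."""
    return {
        doc_id: (text, Counter(tokenize(text)))
        for doc_id, text in documents.items()
    }


def search(index, query, top_k=2):
    """Rank documents by how often the query's tokens occur in them."""
    query_tokens = set(tokenize(query))
    scored = []
    for doc_id, (_, counts) in index.items():
        score = sum(counts[token] for token in query_tokens)
        if score > 0:  # drop documents with no overlap at all
            scored.append((score, doc_id))
    # Highest score first; ties broken by document id for determinism.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [doc_id for _, doc_id in scored[:top_k]]


def build_prompt(index, query, top_k=2):
    """Prepend retrieved passages so the answer is grounded in known sources."""
    hits = search(index, query, top_k)
    context = "\n".join(f"[{doc_id}] {index[doc_id][0]}" for doc_id in hits)
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\nQuestion: {query}"
    )


# Hypothetical sample documents, for illustration only.
docs = {
    "hr-policy": "Employees accrue vacation days monthly.",
    "it-guide": "Reset your password through the self-service portal.",
}
index = ingest(docs)
```

Calling `build_prompt(index, "How do I reset my password?")` yields a prompt whose context contains only the `it-guide` passage, which is the sense in which the knowledge source is controlled.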

Typical lifecycle

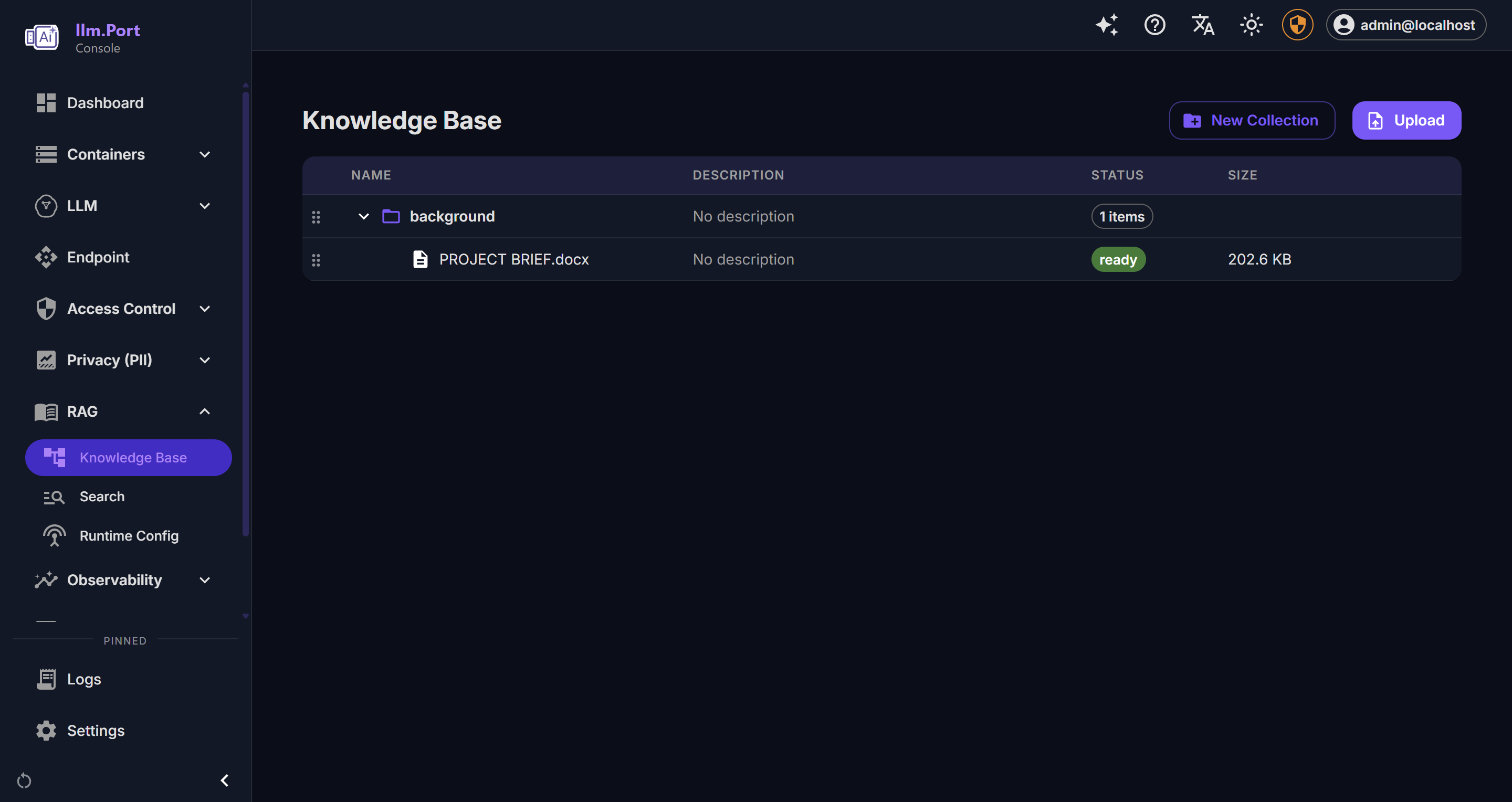

- Upload and organize source documents

- Publish or activate knowledge for use

- Query through the Gateway or chat experiences

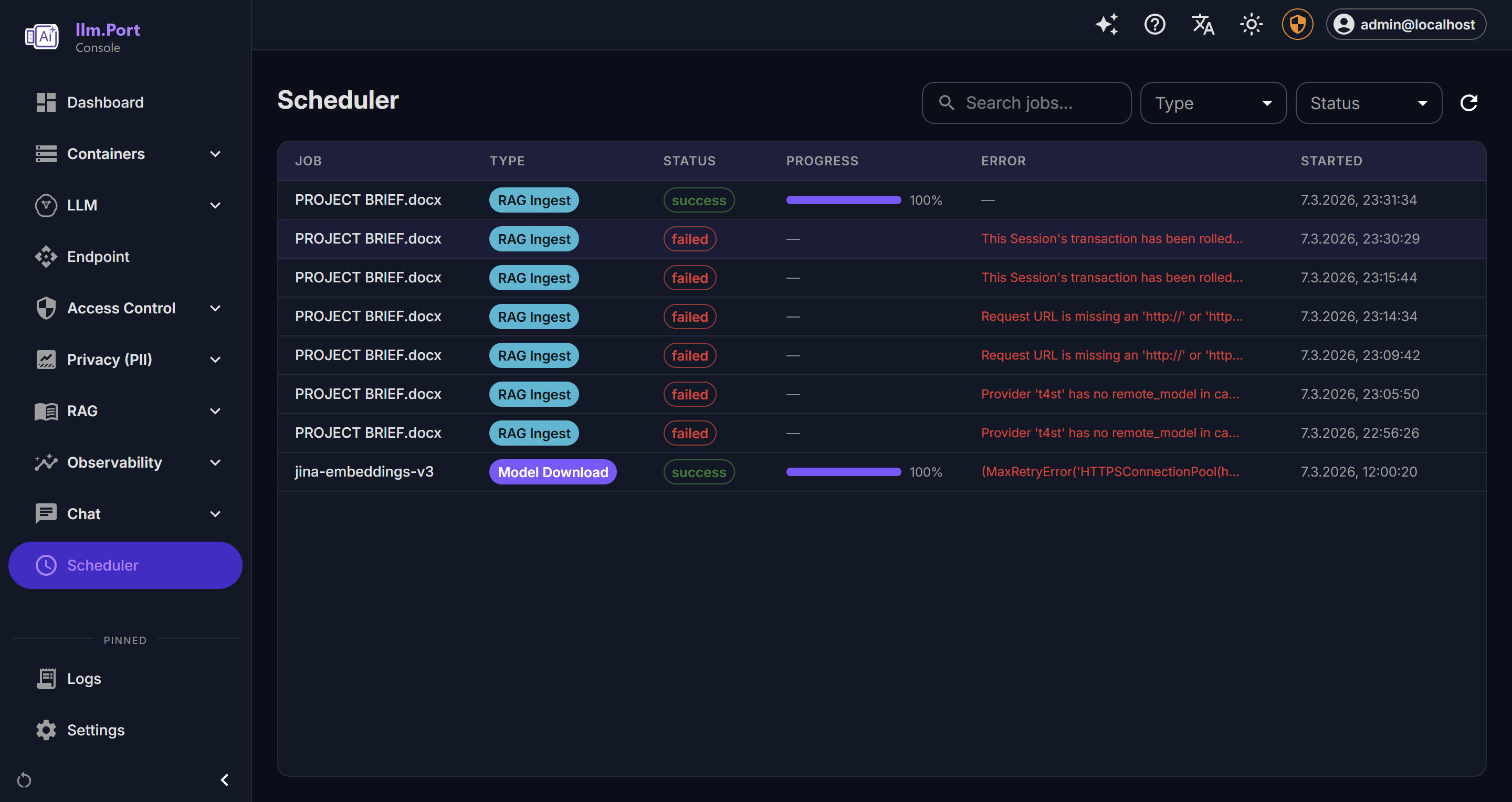

- Monitor usage and quality outcomes
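
The lifecycle steps above can be sketched as a toy in-memory model. This is a hedged illustration only: the `KnowledgeBase` class, its method names, and the two states are assumptions made for the example, not llm.port's data model or API.

```python
from collections import Counter
from enum import Enum


class State(Enum):
    UPLOADED = "uploaded"    # stored but not yet searchable
    PUBLISHED = "published"  # activated for use at query time


class KnowledgeBase:
    """Hypothetical store showing upload -> publish -> query -> monitor."""

    def __init__(self):
        self._docs = {}                # doc_id -> (text, State)
        self.query_counts = Counter()  # doc_id -> times returned by a query

    def upload(self, doc_id, text):
        """Add a document; it stays invisible to queries until published."""
        self._docs[doc_id] = (text, State.UPLOADED)

    def publish(self, doc_id):
        """Activate an uploaded document so queries can return it."""
        text, _ = self._docs[doc_id]
        self._docs[doc_id] = (text, State.PUBLISHED)

    def query(self, term):
        """Return ids of published documents containing the term."""
        hits = [
            doc_id
            for doc_id, (text, state) in self._docs.items()
            if state is State.PUBLISHED and term.lower() in text.lower()
        ]
        self.query_counts.update(hits)  # simple usage monitoring
        return hits
```

The point of the sketch is the gating: a document that has been uploaded but not published never appears in query results, and each returned document is counted so that usage can be reviewed later.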

Public deployment guidance

- Start with a focused knowledge scope

- Define content ownership and refresh cadence

- Validate permission boundaries before broader rollout
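
One way to validate permission boundaries before a broader rollout is to check that retrieval never returns content outside a user's groups. The sketch below is a generic illustration under stated assumptions: the `Chunk` type, the `allowed_groups` field, and both helper functions are hypothetical and do not describe how llm.port enforces access.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    doc_id: str
    text: str
    allowed_groups: frozenset  # groups permitted to see this chunk


def filter_by_permission(chunks, user_groups):
    """Keep only chunks that share at least one group with the user."""
    user_groups = frozenset(user_groups)
    return [c for c in chunks if c.allowed_groups & user_groups]


def find_leaks(returned_chunks, user_groups):
    """Return ids of chunks the user should not have received."""
    user_groups = frozenset(user_groups)
    return [
        c.doc_id for c in returned_chunks
        if not (c.allowed_groups & user_groups)
    ]


# Hypothetical sample data, for illustration only.
chunks = [
    Chunk("handbook", "General onboarding steps.", frozenset({"all-staff"})),
    Chunk("payroll", "Salary bands by level.", frozenset({"hr"})),
]
```

Running `find_leaks` over unfiltered results for a non-HR user flags `payroll`, while results passed through `filter_by_permission` produce no leaks. A check of this shape can run against a small pilot group before access is widened.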

Notes

The public docs describe RAG at the capability level. Details of how documents are indexed and processed are not published.
