What is llm.port?

llm.port is a self-hosted AI workspace that helps teams run LLM features in production with access control, data protection, and usage visibility built in.

In simple terms, it gives you one place to:

- connect models (local or hosted)

- manage who can do what

- protect sensitive data

- see usage, quality, and cost in one dashboard

What you can do with it

- Launch a model and expose it to your apps quickly

- Keep governance in place without slowing teams down

- Track requests, errors, and spend without extra tooling

- Add capabilities like RAG or PII controls when you need them

Who it is for

- Teams that need AI in production, not just demos

- Security-conscious organizations that must keep control of data

- Product teams that want to ship AI features faster

Why teams choose llm.port

- Faster rollout: from install to first request in minutes

- Safer operations: policy controls, audit visibility, and privacy guardrails

- Flexible setup: use local models, hosted providers, or both

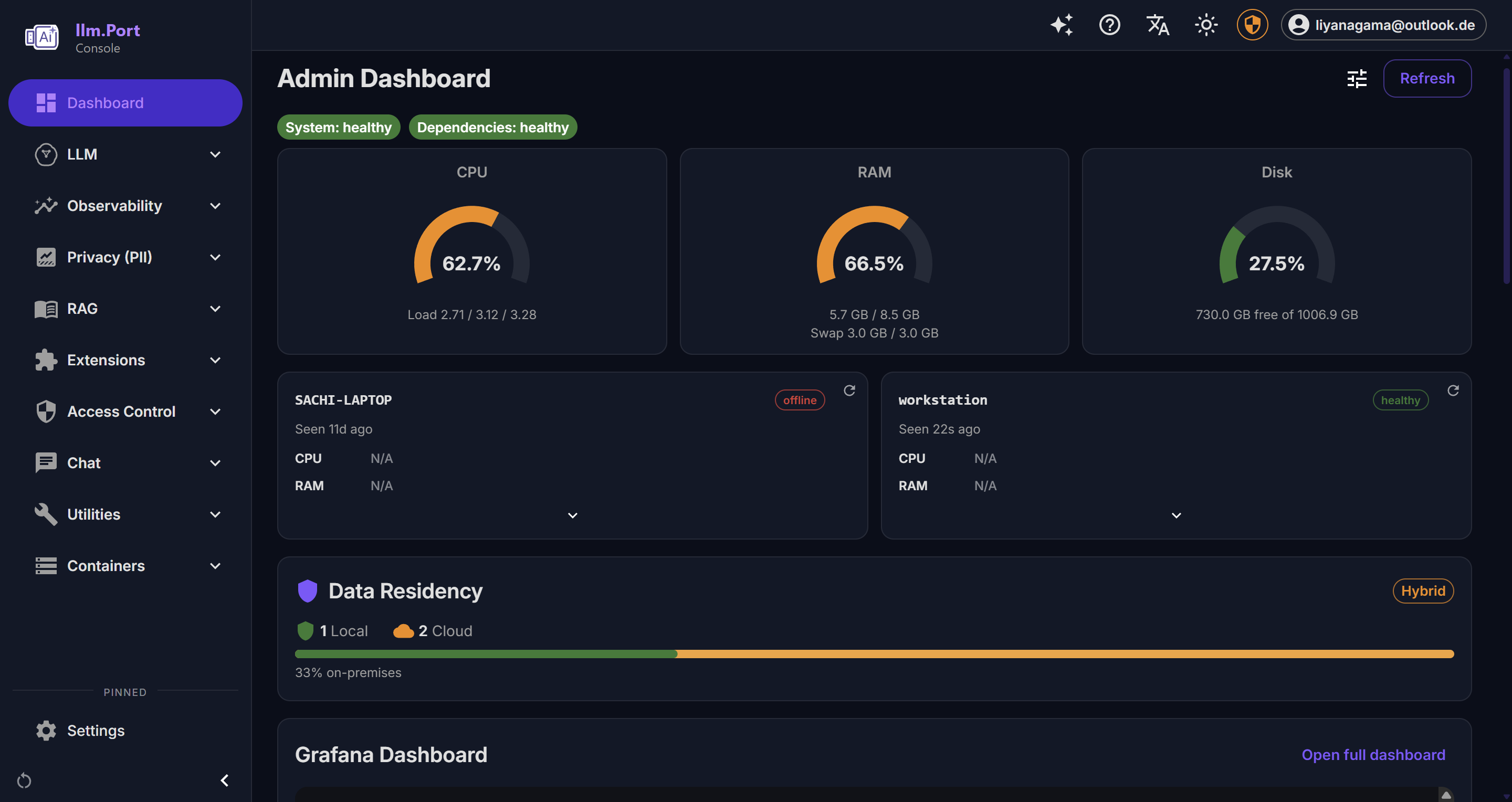

A quick visual

Next steps

- Start with the Quickstart

- Review the Platform Overview

- Integrate via the API Gateway